CICERO PERSPECTIVE

What to consider

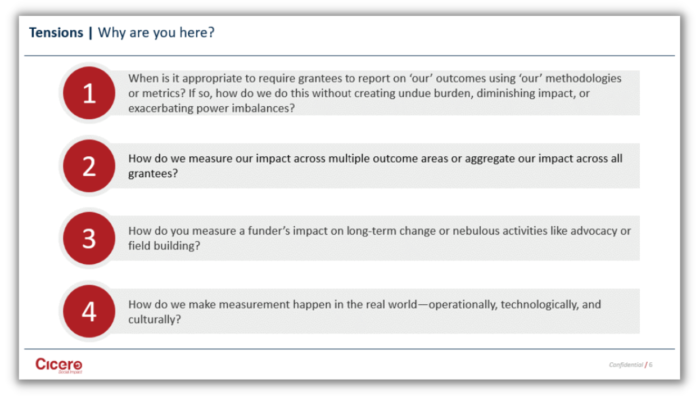

When is it appropriate to require grantees to report on ‘our’ outcomes using ‘our’ methodologies or metrics? If this is ever appropriate, how do we do this without creating undue burden, diminishing impact, or exacerbating power imbalances?

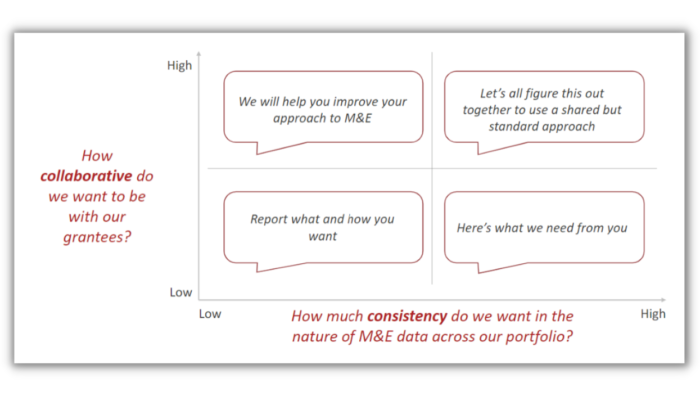

Organizations typically frame this as a simple choice between a “hands-off” and a “hands-on” approach. In our experience, however, a more nuanced approach, one that balances data consistency with collaboration with grantees, typically leads to greater impact.

There are three rules of thumb you can follow when thinking through whether to have grantees report on metrics, how collaborative you want to be, and how consistent you want your data to be:

- Avoid extremes. M&E is not an all-or-nothing approach. Rather, decide what is the rule and what is the exception in your M&E, and work to keep one from becoming the other.

- Ensure M&E benefits everyone. Your M&E process involves multiple stakeholders, and every person who touches it should benefit in some way.

- Invest in learning and improvement. M&E is a system you build incrementally. Start from where you are, know where you want to go, and take small steps toward your end goal. It does not need to be an overnight change!

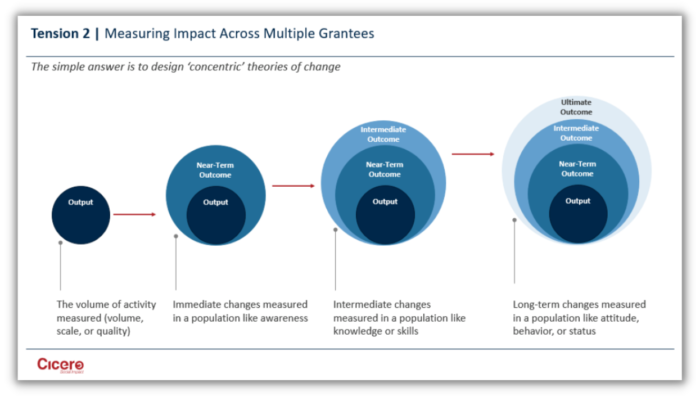

How do we measure our impact across multiple outcome areas or aggregate our impact across all grantees?

Things get complicated when you connect not just ‘smaller’ and ‘bigger’ things but different things: aggregating apples and oranges. Adding multiple areas of focus multiplies the potential outcomes.

To better conceptualize this, consider a funder whose ultimate goal is health equity. Some outcomes associated with health equity include improved access to care and improved quality of care. But we also know that secondary factors like education, location, and income can play a major role in health equity. So while one grantee might report the number of clinics accessed, another might report increases in third-grade reading rates.

When analyzing the data, look at the grantees’ collective impact on each common metric or outcome. For example, combine the results of the grants that address access to determine whether access improved across them. From there, you can assess whether these grants improved access, and ultimately health equity. The challenge is to work through that complexity toward something cleaner and simpler.
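To make the apples-and-oranges point concrete, here is a minimal Python sketch, assuming each grantee report is a simple record of grantee, metric, and value, and that values are additive counts. The `GranteeReport` type, the `totals_by_metric` function, and the metric names are hypothetical, chosen for illustration only. The sketch groups reports by metric so that different metrics are never summed together, and it keeps the list of contributing grantees behind each total.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class GranteeReport:
    grantee: str
    metric: str   # hypothetical metric key, e.g. "clinics_accessed"
    value: float  # assumed to be an additive count in this sketch


def totals_by_metric(reports):
    """Group reports by metric and sum values within each group.

    Different metrics are never added together (no apples plus oranges):
    each metric gets its own total and its own list of contributing grantees.
    """
    totals = defaultdict(float)
    contributors = defaultdict(list)
    for report in reports:
        totals[report.metric] += report.value
        contributors[report.metric].append(report.grantee)
    return dict(totals), dict(contributors)


if __name__ == "__main__":
    # Hypothetical reports, for illustration only.
    reports = [
        GranteeReport("Grantee A", "clinics_accessed", 12),
        GranteeReport("Grantee B", "clinics_accessed", 8),
        GranteeReport("Grantee C", "students_reading_at_grade_level", 150),
    ]
    totals, contributors = totals_by_metric(reports)
    print(totals["clinics_accessed"], contributors["clinics_accessed"])
```

Rates and percentages, such as reading rates, would need averaging or weighting rather than simple sums; this sketch deliberately sticks to counts.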

Several keys to success apply when measuring across multiple areas:

- Focus on a small set of ‘big’ outcomes: this allows everything else to be seen as a contributing factor.

- Gain clarity about what the program areas are and set boundaries between them.

- Define the ‘equation’ that explains the impact relationship between elements.

- Track the impact elements that relate to the equation.

- Develop dynamic statements that enable reporting at different levels of the process.
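The ‘equation’ and the dynamic statements can be sketched in a few lines. In this minimal Python example, a mapping from metrics to program areas and from program areas to big outcomes stands in for the equation. Every name in it (`METRIC_TO_AREA`, `AREA_TO_OUTCOME`, `roll_up`, `statements`, and the area and outcome labels) is a hypothetical assumption for illustration. Because each metric maps to exactly one program area, the dictionaries also enforce the boundaries between areas; metrics outside those boundaries are set aside rather than silently counted.

```python
from collections import defaultdict

# Hypothetical "equation": each metric feeds exactly one program area,
# and each program area contributes to one big outcome.
METRIC_TO_AREA = {
    "clinics_accessed": "Access to care",
    "grade3_readers": "Education",
}
AREA_TO_OUTCOME = {
    "Access to care": "Health equity",
    "Education": "Health equity",
}


def roll_up(reports):
    """Roll (grantee, metric, value) reports up to program areas and outcomes.

    Returns per-area metric totals, the set of contributing grantees per
    outcome, and any reports whose metric falls outside the defined areas.
    """
    by_area = defaultdict(lambda: defaultdict(float))  # area -> metric -> total
    grantees_by_outcome = defaultdict(set)             # outcome -> grantees
    unmapped = []
    for grantee, metric, value in reports:
        area = METRIC_TO_AREA.get(metric)
        if area is None:
            unmapped.append((grantee, metric))  # outside the M&E boundary
            continue
        by_area[area][metric] += value
        grantees_by_outcome[AREA_TO_OUTCOME[area]].add(grantee)
    return by_area, grantees_by_outcome, unmapped


def statements(by_area, grantees_by_outcome):
    """Fill simple templates so the same data reads at outcome and program level."""
    lines = []
    for outcome in sorted(grantees_by_outcome):
        count = len(grantees_by_outcome[outcome])
        lines.append(f"Outcome level: {count} grantee(s) contributed to {outcome}.")
    for area in sorted(by_area):
        for metric in sorted(by_area[area]):
            total = by_area[area][metric]
            lines.append(f"Program level ({area}): {total:g} {metric}.")
    return lines


if __name__ == "__main__":
    # Hypothetical reports, for illustration only.
    reports = [
        ("Grantee A", "clinics_accessed", 12),
        ("Grantee B", "clinics_accessed", 8),
        ("Grantee C", "grade3_readers", 150),
    ]
    by_area, by_outcome, unmapped = roll_up(reports)
    for line in statements(by_area, by_outcome):
        print(line)
```

The statement templates here are deliberately plain; the design point is that one set of tracked elements feeds reporting at the outcome level and at the program level without re-collecting data.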

However, this approach has limitations. Measuring the ultimate outcome can be difficult or impossible, so you must be comfortable working with broad information. You may need to keep clarifying your tools, processes, and roles along the way. And sometimes you are drawing an M&E boundary where no real-world boundary exists.

How do you measure a funder’s impact on long-term change or nebulous activities like advocacy or field building?

In a basic theory of change, things tend to get fuzzy if the activities, outcomes, and metrics involved are not clearly outlined and defined. To avoid the “then a miracle occurs” trap, you must start with a robust theory of change that extends all the way to your desired ultimate outcome.

This requires you to be specific about the key areas required for long-term or systems-level change:

- Be mindful of the types of outcomes that exist: Nature of Change vs. Unit of Change.

- Be realistic about what you can directly influence and hold yourself accountable for.

- Measure direct impacts systematically and, when possible, quantitatively. Find ways to measure indirect or longer-term developments as well as you can.

- Use existing research to bolster the connection between the outcomes you measure directly and the longer-term outcomes that are beyond your ability to measure directly. This helps tie your outcomes together and paints a bigger, overarching picture that makes the case for cause and effect.
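One way to guard against the “then a miracle occurs” trap is to check every link in the theory of change. The minimal Python sketch below assumes each link records a directly measured metric, supporting research, or neither; the `Link` type and the `find_miracle_links` function are hypothetical names for illustration. It reports links backed by neither, breaks where one step does not lead into the next, and whether the chain actually reaches the ultimate outcome.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass(frozen=True)
class Link:
    """One cause-and-effect step in a theory of change (hypothetical structure)."""
    cause: str
    effect: str
    metric: Optional[str] = None    # directly measured indicator, if any
    evidence: Optional[str] = None  # existing research supporting the step, if any


def find_miracle_links(
    chain: List[Link], ultimate_outcome: str
) -> Tuple[List[Link], List[Tuple[str, str]], bool]:
    """Audit a theory-of-change chain.

    Returns:
      gaps         - links backed by neither a direct metric nor existing research
      breaks       - adjacent links where one step's effect is not the next step's cause
      reaches_goal - whether the final link ends at the ultimate outcome
    """
    gaps = [link for link in chain if link.metric is None and link.evidence is None]
    breaks = [
        (prev.effect, nxt.cause)
        for prev, nxt in zip(chain, chain[1:])
        if prev.effect != nxt.cause
    ]
    reaches_goal = bool(chain) and chain[-1].effect == ultimate_outcome
    return gaps, breaks, reaches_goal


if __name__ == "__main__":
    # Hypothetical advocacy chain; the evidence string is a placeholder, not a real study.
    chain = [
        Link("Advocacy campaign", "Policymaker awareness", metric="briefings held"),
        Link("Policymaker awareness", "Policy adopted"),
        Link("Policy adopted", "Improved access to care", evidence="<supporting study>"),
    ]
    gaps, breaks, reaches_goal = find_miracle_links(chain, "Improved access to care")
    for link in gaps:
        print(f"Unsupported step: {link.cause} -> {link.effect}")
    print("Chain breaks:", breaks)
    print("Reaches ultimate outcome:", reaches_goal)
```

In this hypothetical chain, the step from awareness to adoption is flagged: it has neither a direct metric nor supporting research, which is exactly where a miracle would otherwise be assumed.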

As always, assess, learn, adjust, and refine over time. Be data-driven and strategic as you assess how likely your ultimate outcomes are to change and how much influence you have on them; then adjust your M&E, activities, timeline, and resources accordingly.

How do we make measurement happen in the real world: operationally, technologically, and culturally?

When setting the scale of your operational expectations, start with the full picture, but be deliberate about building a minimum viable product (MVP) rather than ‘the sun, moon, and stars.’ Start with the features that will most improve end users’ experience, and deliver in small steps.

Once the strategy is set, your next priority is the information that will affect near-term management and impact decisions. Remember that software and technology are not solutions; they are tools. Their usefulness depends on how well they fit your purpose, how they are used, what goes into them, and how what comes out of them is used. These tools need context to be designed and implemented properly: objectives, user context, processes, roles, and so on. As with anything new, effective implementation, including change management, matters.

M&E is a tool: a means to your end goal, not the end in itself. For those of you on the operational side, I hope this helps drive you to better results, and that the systems you build and your integrations with GivingData become more thoughtful and nuanced so that they create the intended results. M&E is one contributor to our ability to advance our mission, and one crucial element of driving change in the world.
